Agents are already live on Solana, and they don’t wait for approval. Some mirror KOL wallets in the same slot. Others rebalance positions on Kamino and Drift, launch tokens, and route liquidity end-to-end. No human in the loop, no pause between signal and execution.

The open question isn’t “should agents run on-chain.” It’s whether your stack can keep up when they do.

This guide breaks the system down to parts that matter in production:

If your data is late or your submissions miss the slot, model quality won’t save you. Execution quality starts below the agent, in the path between your process and the validator.

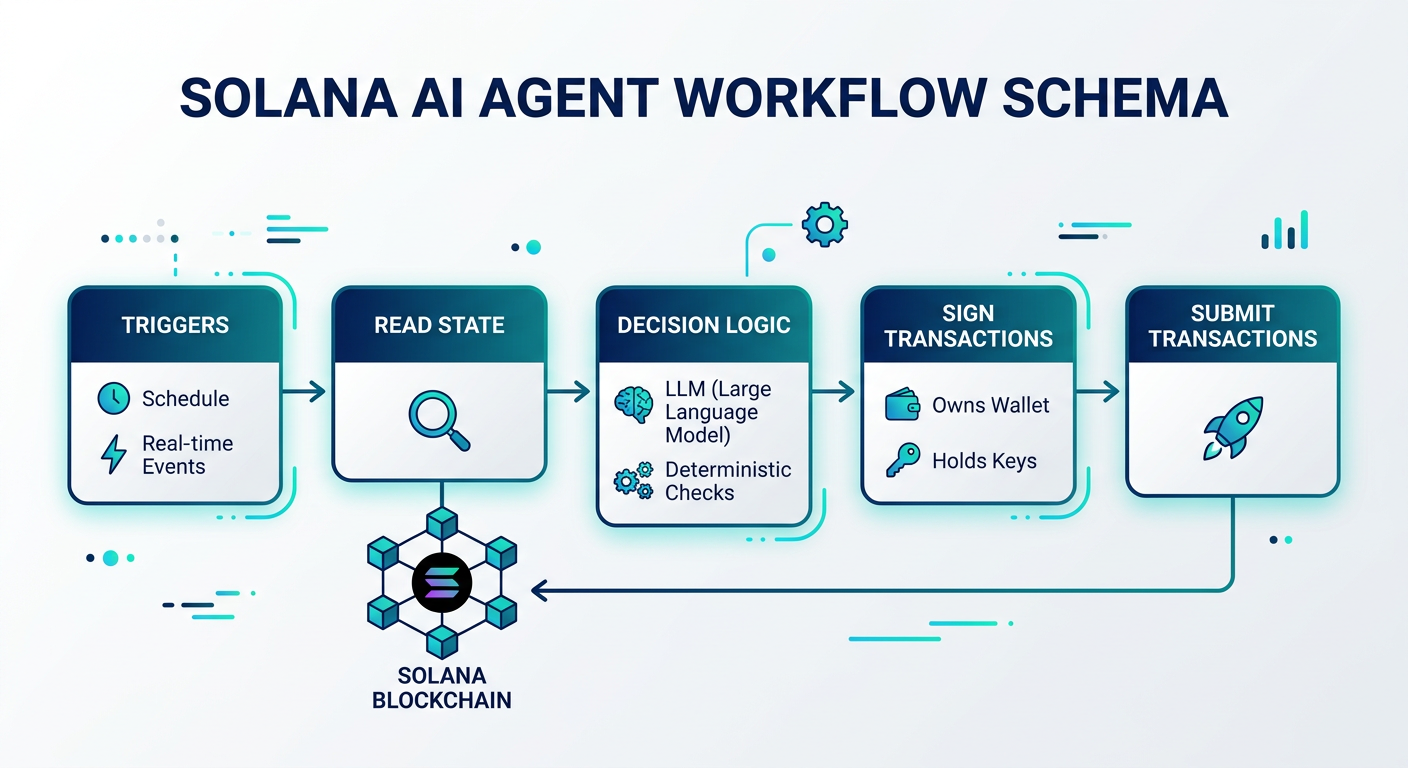

An AI agent is a loop with teeth: observe, decide, act, repeat. No human prompt in the middle, no “run once and exit.”

On-chain, the loop looks like this. The agent reads state, runs decision logic (often an LLM plus deterministic checks), signs transactions, and submits them. It owns a wallet, holds keys, and triggers off a schedule or off real-time events.
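The loop described above can be sketched as a small TypeScript skeleton. Everything here is illustrative: `Observation`, `Action`, `AgentDeps`, `runAgentStep`, and `runAgentLoop` are hypothetical names, and the state reads, decision logic, and submission are injected as plain functions rather than tied to any particular SDK. A real agent would make these calls asynchronous and trigger them on a schedule or an event stream.

```typescript
// Hypothetical shapes for illustration; not taken from any SDK.
interface Observation {
  slot: number;
  solPriceUsd: number;
}

type Action =
  | { kind: "hold" }
  | { kind: "swap"; fromMint: string; toMint: string; amount: number };

interface AgentDeps {
  observe: () => Observation;            // read on-chain and market state
  decide: (obs: Observation) => Action;  // LLM plus deterministic checks
  act: (action: Action) => string;       // sign and submit, returns a signature
}

// One pass of observe -> decide -> act. Returns the signature, or null on "hold".
function runAgentStep(deps: AgentDeps): string | null {
  const obs = deps.observe();
  const action = deps.decide(obs);
  if (action.kind === "hold") return null;
  return deps.act(action);
}

// "Repeat": run the step a bounded number of times and collect signatures.
function runAgentLoop(deps: AgentDeps, maxIterations: number): string[] {
  const signatures: string[] = [];
  for (let i = 0; i < maxIterations; i++) {
    const sig = runAgentStep(deps);
    if (sig !== null) signatures.push(sig);
  }
  return signatures;
}
```

Keeping the three steps behind an interface makes it possible to replay recorded observations through `decide` without touching a wallet.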

The difference from a classic trading bot lies in the decision layer. Bots execute prewritten rules. Agents interpret context, shift tactics, and reason across multiple inputs in one pass: market data, protocol state, wallet flows, social signals.

Solana turns this into an engineering problem, not a UX problem. Slots target ~400ms (often 400–600ms), and blockhashes expire in roughly 60–90 seconds, so stale reads and slow signing paths fail fast. Fees usually amount to fractions of a cent, enabling high-frequency looping.
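The 60–90 second window follows from a recent blockhash being accepted for 150 blocks: 150 × 400 ms is 60 s, and 150 × 600 ms is 90 s. A minimal sketch of that arithmetic and of an expiry check is below. `blockhashLifetimeSeconds` and `isBlockhashExpired` are hypothetical helper names; `lastValidBlockHeight` stands for the value the `getLatestBlockhash` RPC method returns alongside the blockhash.

```typescript
// A recent blockhash is accepted for 150 blocks after it was produced.
const MAX_BLOCKHASH_AGE_BLOCKS = 150;

// Approximate wall-clock lifetime of a blockhash for a given average slot time.
function blockhashLifetimeSeconds(slotTimeMs: number): number {
  return (MAX_BLOCKHASH_AGE_BLOCKS * slotTimeMs) / 1000;
}

// A transaction can no longer land once the chain's block height has passed
// the lastValidBlockHeight that came with its blockhash.
function isBlockhashExpired(
  currentBlockHeight: number,
  lastValidBlockHeight: number
): boolean {
  return currentBlockHeight > lastValidBlockHeight;
}
```

An agent that spends several seconds in an LLM call before signing can use a check like this to decide whether to fetch a fresh blockhash first.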

Every Solana AI agent, no matter its complexity, operates on three layers. Understanding how they interact informs every architecture choice.

This is the LLM brain (GPT, Claude, or an open-source model), managed by a framework that handles memory, goals, and decision-making. It interprets inputs such as price feeds, wallet activity, and social data, then determines the next action.

This layer connects the agent's decisions to the blockchain, including the RPC node for reading state and submitting transactions, the agent framework's tools, and protocol SDKs. It converts intent into signed, broadcast-ready TXs. The Solana-specific SDKs:

| Tool / Framework | Description | Key features & integrations | Resources |
|---|---|---|---|
| SendAI's Solana Agent Kit | A collection of tools for building agents on Solana. | Compatible with Eliza, LangChain, and Vercel AI SDK; includes built-in Solana utilities and NLP capabilities. | Documentation |
| Eliza Framework | A platform combining datalayer, LLM, and agents. | Dedicated Solana plugin; extensible architecture; easy integration for Twitter (X), Telegram, or Discord bots. | GitHub repo |
| GOAT Toolkit | A comprehensive development toolkit for AI agents. | Cross-chain compatibility and standardized tool sets for agents. | GitHub repo |
| Rig Framework | A native Rust implementation for building agents. | High performance; ideal for trading bots; integrates with Listen.rs for Jito bundles and transaction monitoring. | GitHub repo / Listen.rs repo |

Three tools are currently leading the way in building Solana agents, each playing a unique role in supporting different layers of the architecture.

ElizaOS

ElizaOS, from the ai16z team, sits on the reasoning side. It runs the agent loop, keeps memory, enforces personality, and wires into X and Discord. In practice, you see it driving agents that trade and talk at the same time—posting commentary while executing swaps. It decides when to act and why, not how to serialize an instruction.

GOAT SDK (Great Onchain Agent Toolkit)

GOAT takes the opposite job. It gives an LLM hands on-chain without exposing the mess underneath. You define tools like jupiter_swap or kamino_lend with typed inputs. GOAT injects balances, prices, and protocol state into the prompt, then builds and signs transactions. Any tool-calling model plugs in and starts acting on Solana without learning its instruction formats.

Solana Agent Kit

The Solana Agent Kit is the execution layer engineers reach for when they want fewer footguns. It wraps JSON-RPC and protocol SDKs into one-call actions. A swap stops being 50 lines of TypeScript and becomes a function call. Under the hood, it handles transaction assembly, Address Lookup Tables, and signing paths.

A concrete constraint most teams hit: a serialized Solana transaction is capped at 1232 bytes, which forces complex flows to be split across transactions or to use Address Lookup Tables (ALTs). That’s why these abstractions exist.
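To see how quickly the byte budget runs out, here is a rough size estimator for a legacy (non-versioned) transaction. The 1232 figure is the IPv6 minimum MTU of 1280 bytes minus 40 bytes of IPv6 header and 8 bytes of fragment header. The helper names (`compactU16Len`, `estimateLegacyTxBytes`, `IxShape`) are hypothetical, and the sketch only counts bytes; it does not build or serialize anything.

```typescript
const MAX_TX_BYTES = 1232; // 1280 (IPv6 minimum MTU) - 40 (IPv6 header) - 8 (fragment header)

// Byte length of a compact-u16 length prefix as used in the wire format.
function compactU16Len(n: number): number {
  if (n < 0x80) return 1;
  if (n < 0x4000) return 2;
  return 3;
}

// Shape of one instruction: how many accounts it references and its data size.
interface IxShape {
  accountCount: number;
  dataBytes: number;
}

// Estimated wire size of a legacy transaction.
function estimateLegacyTxBytes(
  signatures: number,
  accountKeys: number,
  ixs: IxShape[]
): number {
  const sigSection = compactU16Len(signatures) + 64 * signatures;
  const header = 3; // required signatures, read-only signed, read-only unsigned
  const keySection = compactU16Len(accountKeys) + 32 * accountKeys;
  const blockhash = 32;
  const ixSection =
    compactU16Len(ixs.length) +
    ixs.reduce(
      (sum, ix) =>
        sum +
        1 + // program id index
        compactU16Len(ix.accountCount) +
        ix.accountCount + // one index byte per account
        compactU16Len(ix.dataBytes) +
        ix.dataBytes,
      0
    );
  return sigSection + header + keySection + blockhash + ixSection;
}

// A plain SOL transfer: 1 signature, 3 account keys, 1 instruction with 12 data bytes.
const transferBytes = estimateLegacyTxBytes(1, 3, [{ accountCount: 2, dataBytes: 12 }]);
// 40 account keys alone already exceed the cap before any instruction is added.
const fortyKeysFit = estimateLegacyTxBytes(1, 40, []) <= MAX_TX_BYTES;
```

Each account key costs 32 bytes inline, which is why Address Lookup Tables help: in a versioned transaction, an address loaded from a table is referenced by a 1-byte index instead.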

The production pattern is boring on purpose:

This is the only layer that matters when money moves, the one that enforces reality. That’s the part with programs, keypairs, and consensus.

Every transaction gets signed with real cryptography, executed as a single atomic unit, and finalized fast enough that you don’t have time to second-guess it. If your trade lands, it lands. No Schrödinger’s transaction hanging around.

How these layers work together

Say you’re running an arbitrage bot chasing yield. It notices SOL trading at $150 on Orca and $152 on Raydium. That’s not insight—that’s table stakes. The interesting part is what happens next.

First, something decides the trade is worth taking. That’s your intelligence. It answers the only question that matters at this stage: “Is this worth the risk and fees?”

Then you need a way to express that decision in the chain's language. The connectivity does the grunt work—builds the transaction, routes it through something like Jupiter, and hands it off to an RPC endpoint without messing up serialization or routing.

Finally, Solana takes over. The transaction gets signed, broadcast, and pushed through consensus. No partial fills, no race conditions leaking state. One slot later—about 400 milliseconds—you either captured the spread or you didn’t.

The top layer tells you why to act. The middle layer figures out how to act. And Solana is where the action becomes irreversible reality.
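The "is this worth the risk and fees?" check from the example can be sketched as plain arithmetic on the $150 / $152 quotes. The fee level, network cost, and minimum-profit threshold below are assumed numbers chosen for illustration, and `ArbQuote`, `netProfitUsd`, and `isWorthTaking` are hypothetical names. The sketch ignores price impact and slippage, which a real check would need to model.

```typescript
interface ArbQuote {
  buyPriceUsd: number;   // price on the cheaper venue
  sellPriceUsd: number;  // price on the more expensive venue
  sizeSol: number;       // trade size in SOL
  swapFeeBps: number;    // per-leg pool fee in basis points (assumed)
  networkFeeUsd: number; // total transaction fees in USD (assumed)
}

// Profit after pool fees on both legs and network fees.
function netProfitUsd(q: ArbQuote): number {
  const cost = q.buyPriceUsd * q.sizeSol;
  const proceeds = q.sellPriceUsd * q.sizeSol;
  const swapFees = (cost + proceeds) * (q.swapFeeBps / 10000);
  return proceeds - cost - swapFees - q.networkFeeUsd;
}

// Deterministic gate the decision layer can apply before handing off a trade.
function isWorthTaking(q: ArbQuote, minProfitUsd: number): boolean {
  return netProfitUsd(q) >= minProfitUsd;
}

// 10 SOL at $150 -> $152 with an assumed 25 bps fee per leg: roughly $12.44 net.
const cheapFees: ArbQuote = {
  buyPriceUsd: 150, sellPriceUsd: 152, sizeSol: 10, swapFeeBps: 25, networkFeeUsd: 0.01,
};
// Same spread with an assumed 100 bps fee per leg: the trade loses money.
const expensiveFees: ArbQuote = { ...cheapFees, swapFeeBps: 100 };
```

The gross spread is about 1.33%, so any combination of per-leg fees and slippage above that turns the trade negative, no matter how fast it lands.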

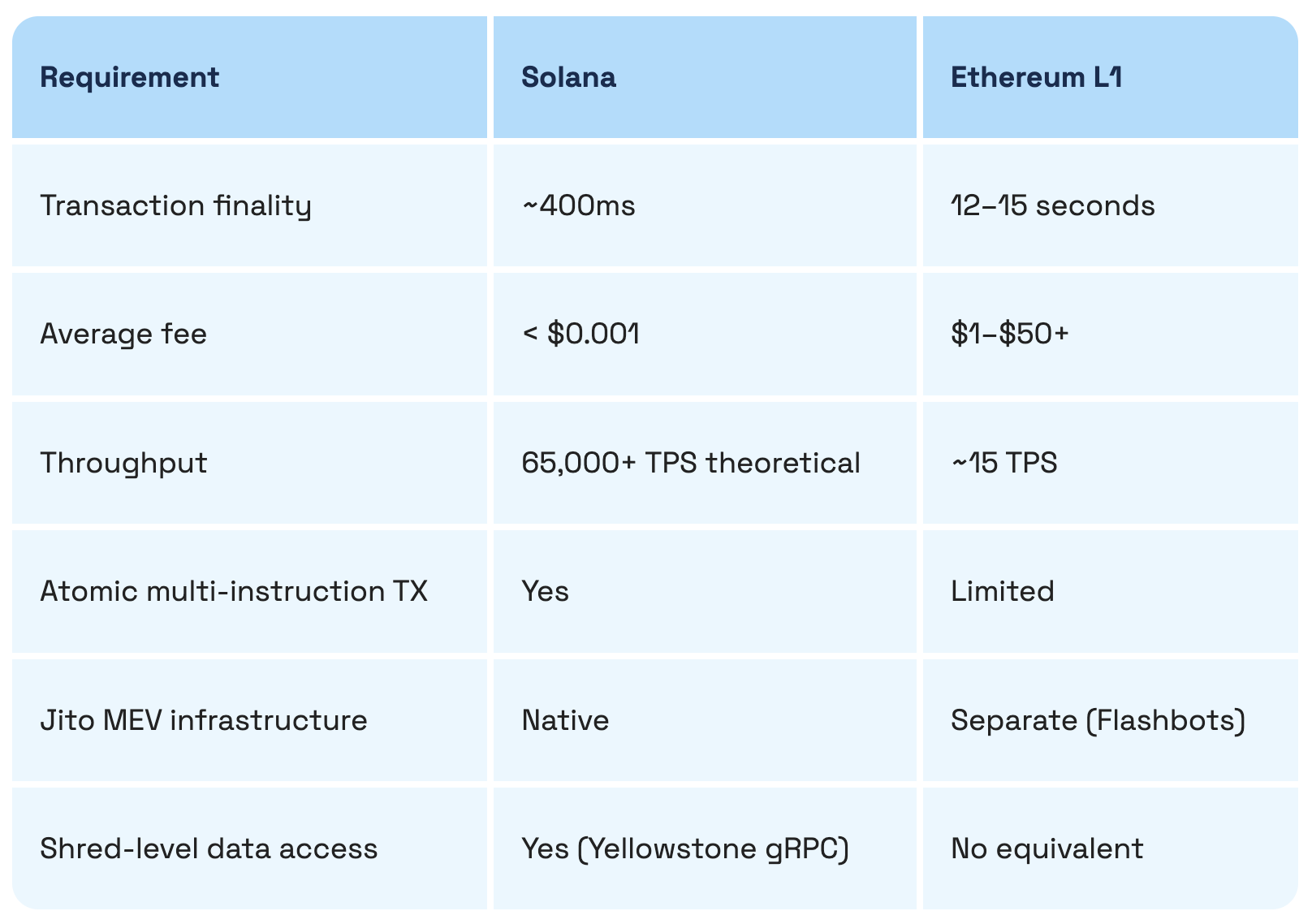

Choosing a chain is an infrastructure decision, not a philosophical one. Solana's properties match what autonomous agents need.

If you’ve ever tried to run a high-frequency strategy on Ethereum, you learn the hard way that gas is not a rounding error—it’s the business model.

Rebalance a portfolio 50 times a day, and you’re lighting hundreds of dollars on fire just to stay in the game. Move the same logic to Solana, and you’re under a dollar. That’s not optimization. That’s the difference between “viable system” and “nice idea on paper.”
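The Solana side of that comparison is easy to check by hand. The base fee is 5000 lamports per signature, and the priority fee is the compute unit limit multiplied by the compute unit price in micro-lamports. The sketch below uses assumed values for the compute unit limit, the compute unit price, and the SOL price; `txFeeLamports` and `dailyFeeUsd` are hypothetical helper names.

```typescript
const LAMPORTS_PER_SOL = 1000000000;
const BASE_FEE_LAMPORTS_PER_SIGNATURE = 5000;

// Fee for one transaction: base fee plus priority fee, rounded up to whole lamports.
function txFeeLamports(
  signatures: number,
  computeUnitLimit: number,
  microLamportsPerCu: number
): number {
  const base = signatures * BASE_FEE_LAMPORTS_PER_SIGNATURE;
  const priority = Math.ceil((computeUnitLimit * microLamportsPerCu) / 1000000);
  return base + priority;
}

// Daily fee spend in USD for a given transaction count and SOL price.
function dailyFeeUsd(txPerDay: number, feeLamports: number, solPriceUsd: number): number {
  return ((txPerDay * feeLamports) / LAMPORTS_PER_SOL) * solPriceUsd;
}

// Assumed: 1 signature, 200,000 CU limit, 10,000 micro-lamports per CU, SOL at $150.
const perTx = txFeeLamports(1, 200000, 10000); // 5000 base + 2000 priority
const perDay = dailyFeeUsd(50, perTx, 150);    // about $0.05 for 50 rebalances
```

Priority fees rise under congestion, so the compute unit price is the number to watch; even at ten times the assumed price, 50 rebalances stay well under a dollar.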

There’s another detail people miss until it bites them: composition.

On Solana, you don’t stitch together state across multiple transactions and hope nothing breaks in between. You pack instructions—borrow, swap, repay—into one atomic transaction. Either all of it goes through, or none of it does. That single property is what makes flash-loan arbitrage and tightly coupled DeFi strategies work without duct tape.
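The all-or-nothing behavior can be illustrated with a toy model. This is not Solana code and uses no SDK: `Balances`, `Instruction`, and `executeAtomically` are hypothetical names, and the "instructions" are plain functions over an in-memory balance sheet. It only shows the semantics the paragraph describes, where a failing step discards every earlier step in the same transaction.

```typescript
interface Balances {
  usdc: number;
  sol: number;
  debt: number;
}

// A toy instruction mutates the draft state and throws if it cannot execute.
type Instruction = (state: Balances) => void;

// Apply all instructions to a copy; commit the copy only if every one succeeds.
function executeAtomically(
  state: Balances,
  instructions: Instruction[]
): { ok: boolean; state: Balances } {
  const draft: Balances = { ...state };
  try {
    for (const ix of instructions) ix(draft);
    return { ok: true, state: draft };
  } catch {
    return { ok: false, state }; // original state is untouched
  }
}

const borrow = (amount: number): Instruction => (s) => {
  s.usdc += amount;
  s.debt += amount;
};

const swapUsdcForSol = (usdcIn: number, solOut: number): Instruction => (s) => {
  if (s.usdc < usdcIn) throw new Error("insufficient USDC");
  s.usdc -= usdcIn;
  s.sol += solOut;
};

const repay = (amount: number): Instruction => (s) => {
  if (s.usdc < amount) throw new Error("cannot repay loan");
  s.usdc -= amount;
  s.debt -= amount;
};

// The swap spends the borrowed USDC, so repay fails and the whole sequence reverts.
const start: Balances = { usdc: 10, sol: 0, debt: 0 };
const failed = executeAtomically(start, [borrow(1000), swapUsdcForSol(1000, 6.5), repay(1000)]);
```

On-chain, the same guarantee is what lets a flash-loan program require repayment within the transaction: if the repay instruction fails, the borrow never happened.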

So what do people build when the constraints loosen? You start seeing patterns repeat:

At some point, the taxonomy breaks down.

The difference between a “social agent” and a “precision trading bot” isn’t philosophical. It comes down to latency tolerance and how much control you want over transaction construction. Everything else is an implementation detail.

The quickest way to get a functional agent is by using the Solana Agent Kit with a TypeScript runtime. This is the basic architecture.

Prerequisites:

It’s time to have a meal and watch YouTube 🙂

Install dependencies:

```bash
npm install solana-agent-kit @solana/web3.js @langchain/openai @langchain/langgraph
```

Initialize the agent:

```typescript
import { SolanaAgentKit, createSolanaTools } from "solana-agent-kit";
import { ChatOpenAI } from "@langchain/openai";
import { createReactAgent } from "@langchain/langgraph/prebuilt";

// The kit holds the wallet and the RPC connection used for every action.
const agent = new SolanaAgentKit(
  process.env.SOLANA_PRIVATE_KEY!,
  process.env.RPC_URL!, // Your RPC endpoint goes here
  { OPENAI_API_KEY: process.env.OPENAI_API_KEY! }
);

// Expose the kit's actions as tools the LLM can call.
const tools = createSolanaTools(agent);
const llm = new ChatOpenAI({ model: "gpt-4o", temperature: 0 });
const solanaAgent = createReactAgent({ llm, tools });
```

Execute a swap:

```typescript
const result = await solanaAgent.invoke({
  messages: [{
    role: "user",
    content: "Swap 0.1 SOL for USDC using Jupiter. Use a 0.5% slippage tolerance."
  }]
});

// The last message holds the agent's final answer.
console.log(result.messages.at(-1)?.content);
```

This is the full loop in its simplest form: the LLM receives an instruction, selects the trade tool from the Solana Agent Kit, constructs the Jupiter swap transaction, signs it with the agent's keypair, and submits it to your RPC endpoint.

If your agent leaks alpha, you lose money. If it leaks its private key, you lose everything. So don’t stick the key in an environment variable on a shared box and call it “good enough.” That’s how you end up debugging an empty wallet at 3 a.m.

Use a proper KMS—AWS KMS, HashiCorp Vault, Google Cloud KMS—and fetch the key at runtime. The signing happens in memory, and the raw material never hits disk.

Once real capital is involved, tighten the security loop further.

Have the agent propose transactions, not blindly execute them. Route anything above a defined threshold through a second signer—a hardware wallet or an isolated approval service. You trade a bit of latency for a hard cap on blast radius. That’s the difference between a system that fails gracefully and one that wipes itself out in a single bad call.
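A minimal sketch of the propose-then-route pattern is below. `ProposedTx`, `Route`, and `ApprovalPolicy` are hypothetical names, and the daily auto-sign cap is an added assumption on top of the per-transaction threshold the text describes. The policy only decides where a proposal goes; the second signer itself (hardware wallet or approval service) sits outside this code.

```typescript
interface ProposedTx {
  id: string;
  notionalUsd: number; // estimated USD value the transaction moves
}

type Route = "auto-sign" | "second-signer";

class ApprovalPolicy {
  private readonly perTxThresholdUsd: number;
  private readonly dailyAutoCapUsd: number; // assumed extra guard, not from the article
  private autoSignedTodayUsd = 0;

  constructor(perTxThresholdUsd: number, dailyAutoCapUsd: number) {
    this.perTxThresholdUsd = perTxThresholdUsd;
    this.dailyAutoCapUsd = dailyAutoCapUsd;
  }

  // Decide whether the agent may sign alone or must escalate.
  route(tx: ProposedTx): Route {
    const overPerTx = tx.notionalUsd > this.perTxThresholdUsd;
    const overDaily = this.autoSignedTodayUsd + tx.notionalUsd > this.dailyAutoCapUsd;
    if (overPerTx || overDaily) return "second-signer";
    this.autoSignedTodayUsd += tx.notionalUsd;
    return "auto-sign";
  }

  // Call once per day (for example from a scheduler) to reset the running total.
  resetDailyTotal(): void {
    this.autoSignedTodayUsd = 0;
  }
}
```

Putting the check in deterministic code rather than in the prompt means a confused or manipulated model cannot talk its way past the limit.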

The Agent Kit covers most cases. You get transactions built, routed, and executed without thinking about every byte. For a lot of teams, that’s enough to ship and start learning from production.

But the moment you care about same-slot execution or shaving milliseconds off an arb path, you hit the ceiling. Copy-trading in the same slot, or calling Raydium’s AMM directly instead of going through Jupiter, forces you down a level. Now you’re in @solana/web3.js, assembling TransactionInstruction objects by hand, deciding instruction order, tuning compute unit limits, and packaging Jito bundles yourself.

You get control. You also get the bill: weeks of engineering time and ongoing upkeep every time a protocol shifts its interface.

Start with the Agent Kit. Run it in production. Measure where latency or execution quality drops off. Then replace only the parts that block your strategy. Everything else stays abstracted, and your team keeps moving.
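"Measure where latency or execution quality drops off" can start as simply as logging send and land timestamps per attempt and summarizing them. The sketch below is one way to do that; `Attempt`, `percentile`, and `summarize` are hypothetical names, timestamps are assumed to be in milliseconds, and the percentile uses the nearest-rank method.

```typescript
interface Attempt {
  sentAtMs: number;
  landedAtMs: number | null; // null when the transaction never landed
}

// Nearest-rank percentile; returns NaN when there are no samples.
function percentile(samples: number[], p: number): number {
  const sorted = [...samples].sort((a, b) => a - b);
  if (sorted.length === 0) return NaN;
  const rank = Math.ceil((p / 100) * sorted.length);
  const index = Math.min(sorted.length - 1, Math.max(0, rank - 1));
  const value = sorted[index];
  return value === undefined ? NaN : value;
}

// Landing rate plus median and tail time-to-land over a batch of attempts.
function summarize(attempts: Attempt[]): { landedRate: number; p50Ms: number; p99Ms: number } {
  const latencies: number[] = [];
  for (const a of attempts) {
    if (a.landedAtMs !== null) latencies.push(a.landedAtMs - a.sentAtMs);
  }
  return {
    landedRate: attempts.length === 0 ? 0 : latencies.length / attempts.length,
    p50Ms: percentile(latencies, 50),
    p99Ms: percentile(latencies, 99),
  };
}
```

Tracking the tail (p99) alongside the landing rate matters because the slow and dropped submissions tend to cluster in exactly the congested periods when opportunities appear.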

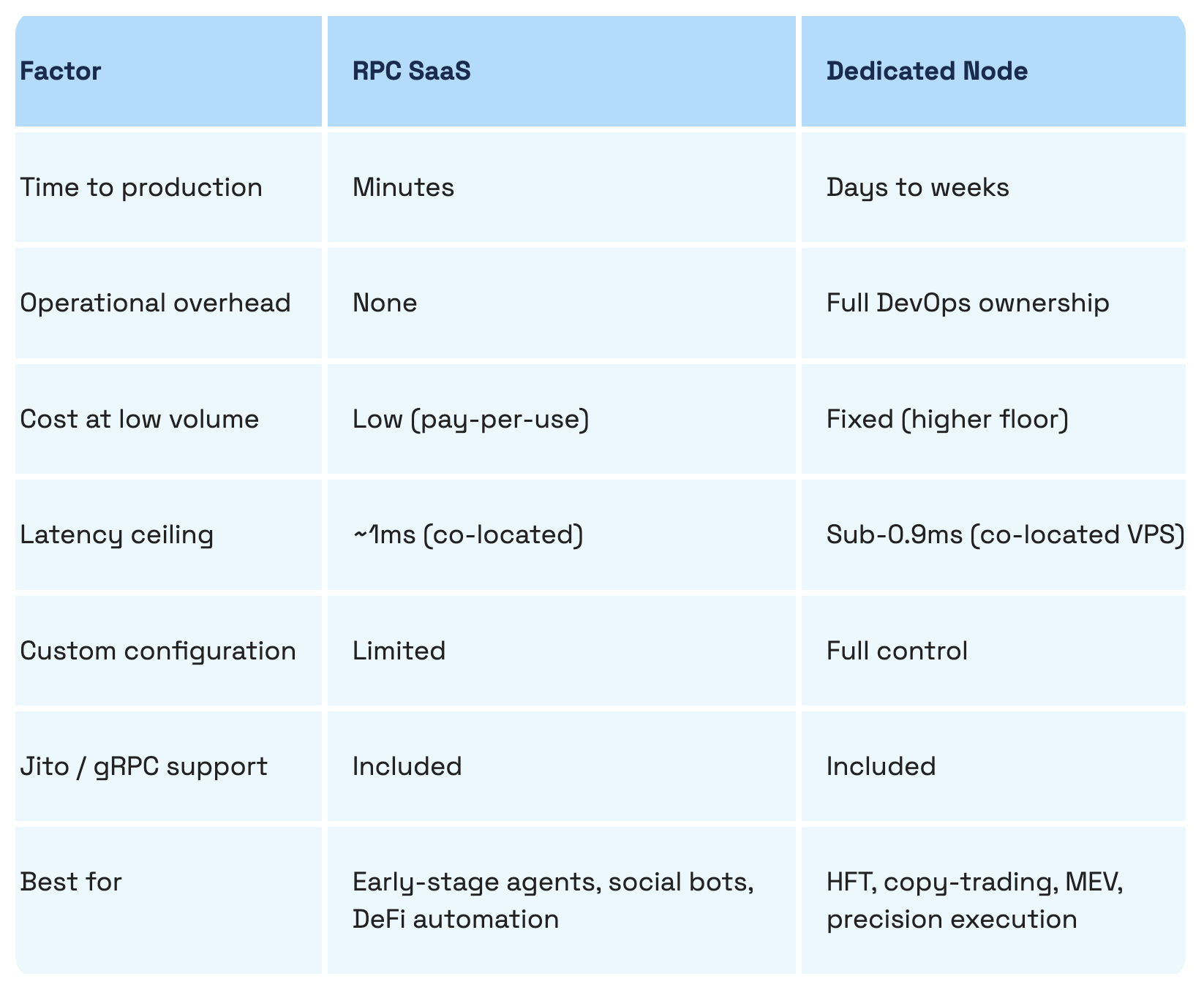

Public Solana RPC looks fine until it isn’t. It’s rate-limited, often far from validator hubs, and shared with everyone else. When the network heats up—a Pump.fun launch or a liquidation wave—those endpoints are first to fall behind. Your transactions don’t land. The spread closes without you.
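One way to notice an endpoint falling behind is to compare the slot each endpoint reports (for example via the `getSlot` RPC method) and drop any that lag the freshest one. The sketch below assumes the samples have already been collected; `EndpointSample` and `rankEndpoints` are hypothetical names, the URLs are placeholders, and the lag tolerance is an arbitrary example value.

```typescript
interface EndpointSample {
  url: string;
  slot: number;      // slot reported by the endpoint at sampling time
  latencyMs: number; // round-trip time of the sampling request
}

// Keep endpoints within maxSlotLag of the freshest slot, fastest first.
function rankEndpoints(samples: EndpointSample[], maxSlotLag: number): EndpointSample[] {
  if (samples.length === 0) return [];
  const tip = Math.max(...samples.map((s) => s.slot));
  return samples
    .filter((s) => tip - s.slot <= maxSlotLag)
    .sort((a, b) => a.latencyMs - b.latencyMs);
}

// Placeholder data: the lowest-latency endpoint is 10 slots stale and gets dropped.
const ranked = rankEndpoints(
  [
    { url: "https://rpc-a.example", slot: 1000, latencyMs: 40 },
    { url: "https://rpc-b.example", slot: 1000, latencyMs: 15 },
    { url: "https://rpc-c.example", slot: 990, latencyMs: 5 },
  ],
  2
);
```

Filtering on freshness before latency reflects the point above: a fast response carrying stale state is worse than a slightly slower one at the tip.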

A production setup has different requirements:

getAccountInfo, getLatestBlockhash, simulateTransaction. You’re competing on time, not correctness.

One Dysnix micro-case: moving a market-making bot from public RPC to a dedicated, region-aligned endpoint with Jito bundles cut failed submissions from 18% to under 2% during peak slots, and improved time-to-land by ~120–180 ms. That delta shows up directly in PnL.

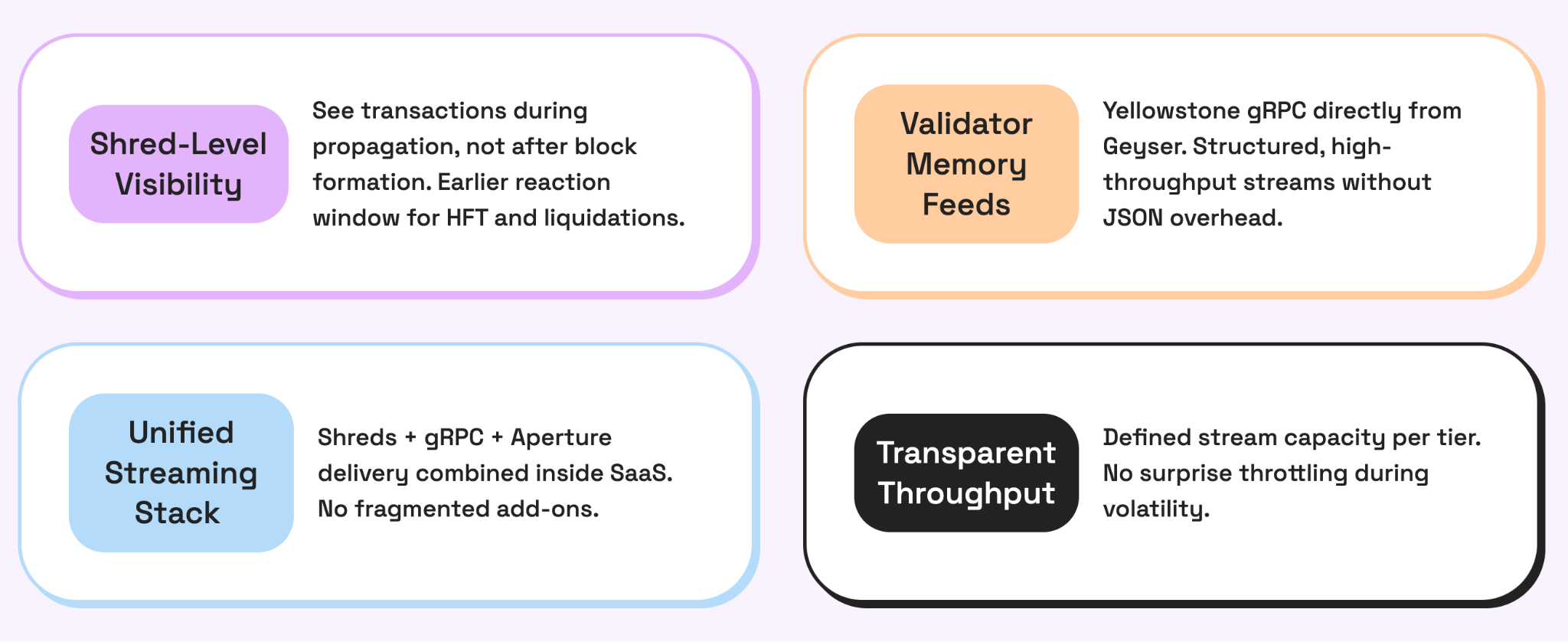

RPC Fast's RPC SaaS tier gives you a reliable, production-grade Solana endpoint in minutes, with support for Jito bundles, Yellowstone gRPC, and coverage in both EU and US regions.

Launch RPC SaaS for free and connect your agent in one line.

For teams developing precision execution systems, the dedicated node path offers a complete stack: co-location in Frankfurt or US East, Jito ShredStream, Yellowstone gRPC, and direct access to the node's JSON-RPC, all without shared infrastructure with the validator network.

Benchmarks don’t matter until they line up with how your bot loses money.

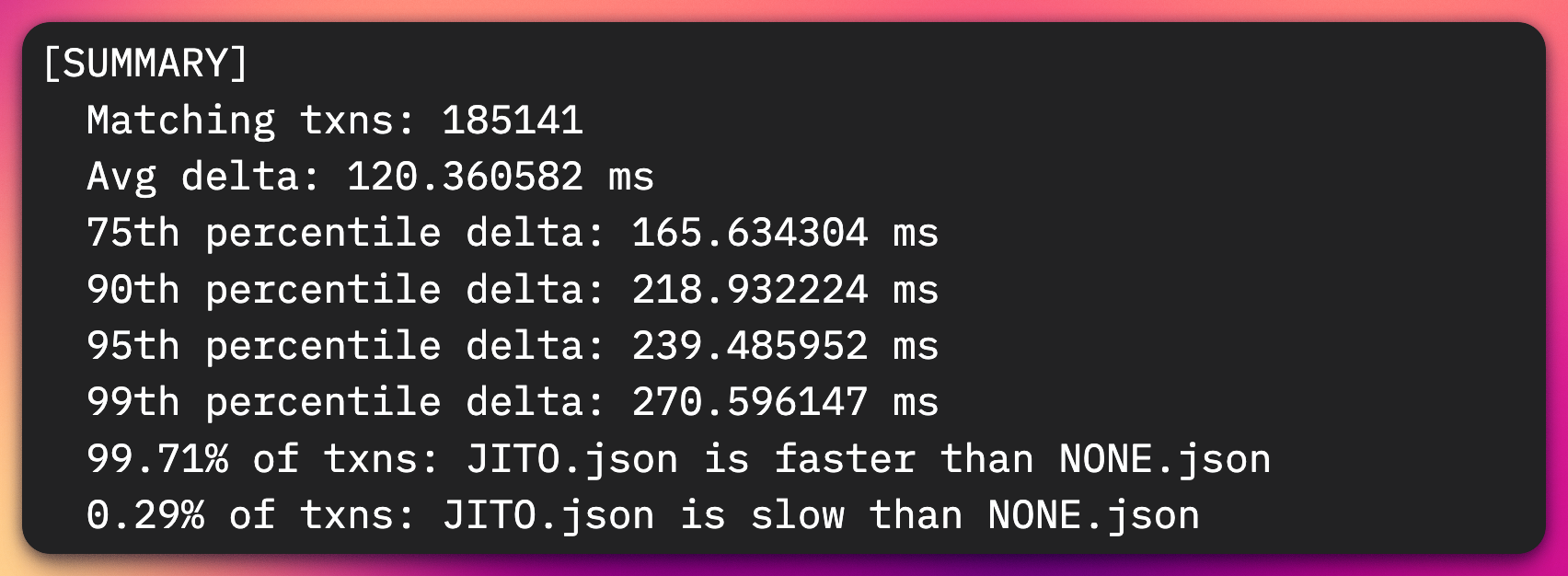

RPC Fast published numbers on Jito ShredStream: transactions arrive ~120.36 ms earlier on average, with a 99th percentile edge of 270.6 ms across 185,141 matched transactions. In a quiet network, that’s trivia. Under load, that’s the difference between first and forgotten.

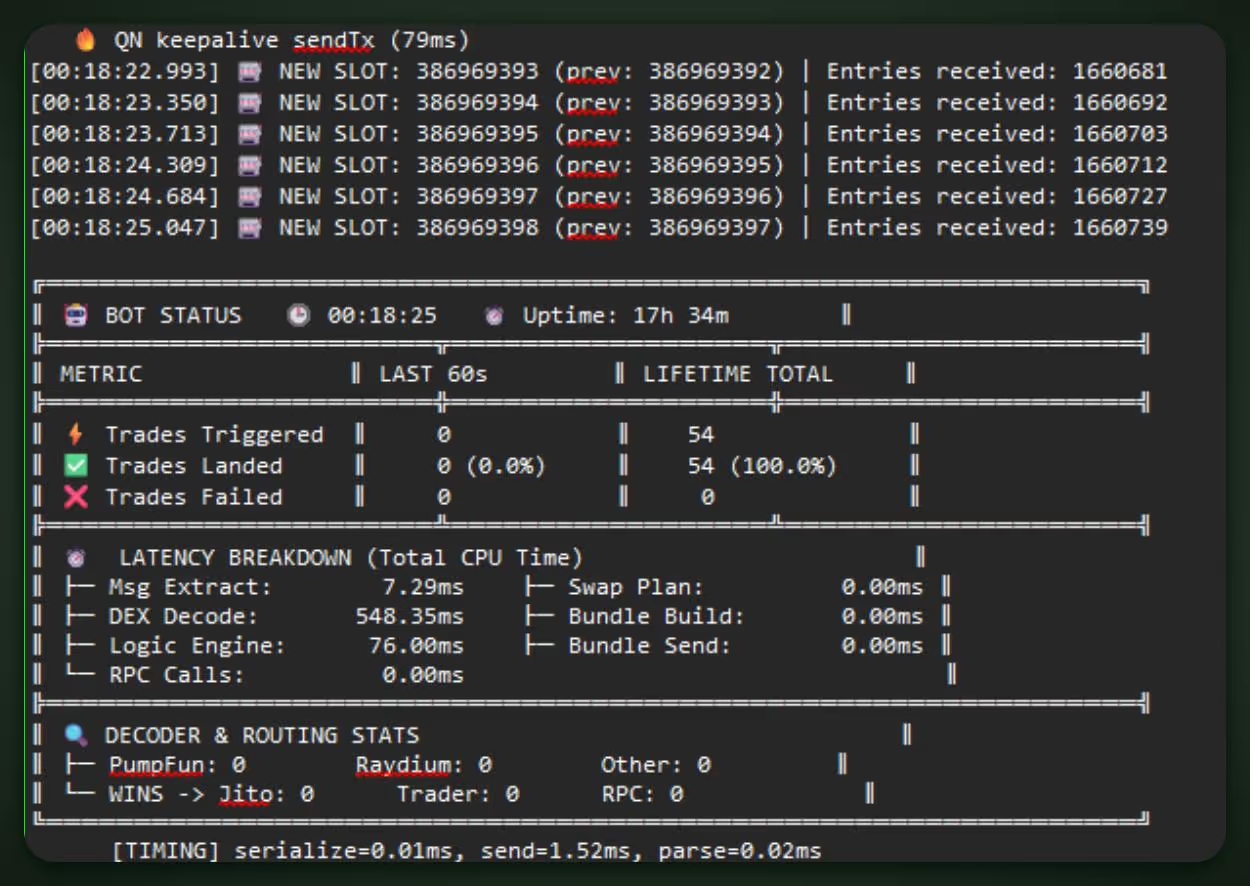

A concrete run: a Rust copy-trading agent, co-located with a Frankfurt node, hit 15 ms best-case landing and kept same-slot or +1 slot execution against KOL wallets. In a 100,000-call stress test, the node stayed under 1 ms response time with no rate limiting. That’s what stable infra looks like when the tape speeds up.

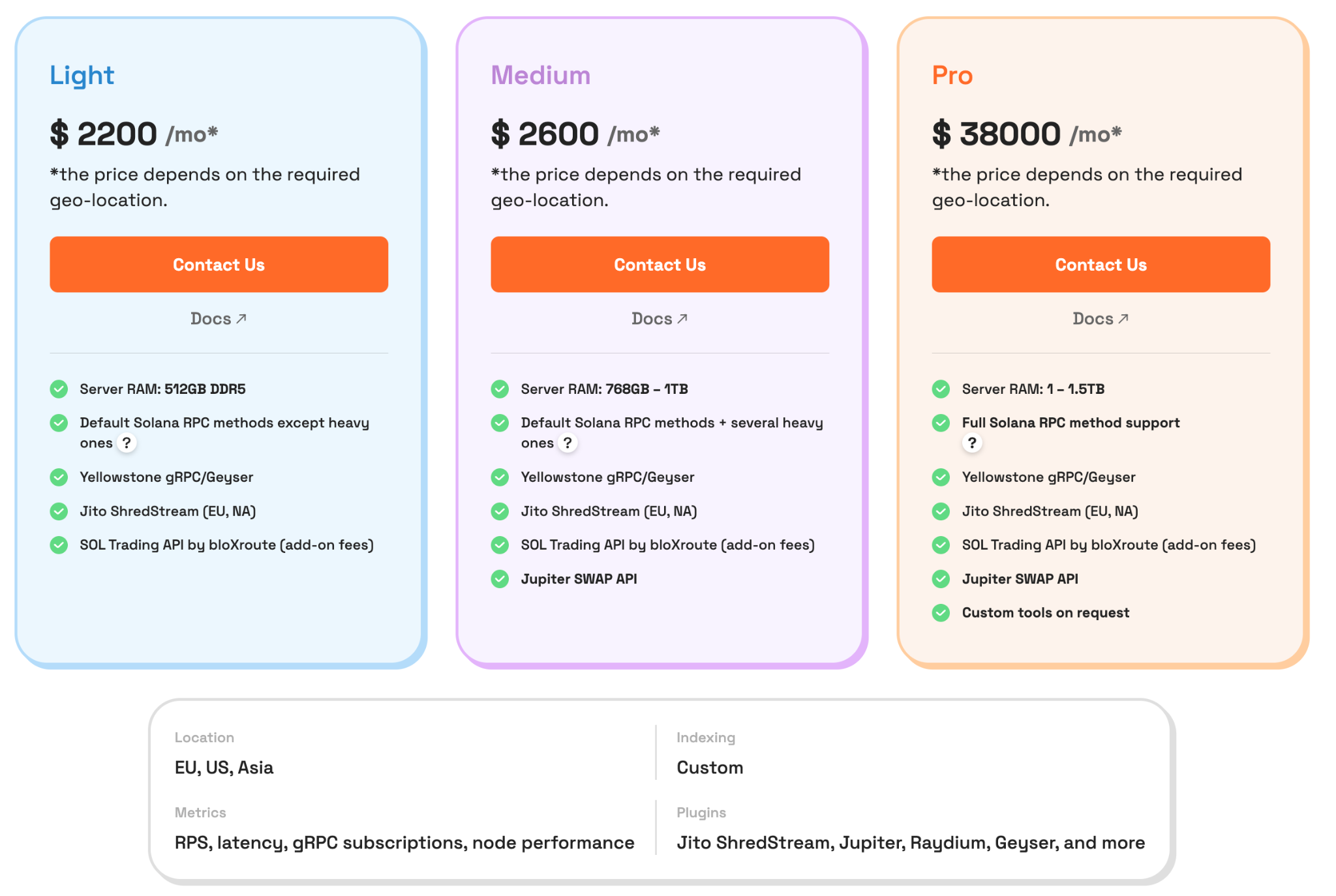

Now the part people overcomplicate: sizing. RPC Fast splits dedicated nodes into three tiers:

getAccountInfo and simulateTransaction. Typical yield optimizers live here.

getProgramAccounts at scale for pool scans and state indexing. Copy-trading systems tend to land here.

The complete tier breakdown connects each Solana RPC method to the hardware that consistently supports it.

If your infrastructure is an afterthought, you aren’t running an agent; you’re running a simulation that pays fees to faster people.

And if you need another pair of eyes to analyze your current setup, Dysnix and RPC Fast experts are ready to help you in no time.

